Roger Binns — Wed 09 April 2014

TLDR: Panasonic use an outsourced company for support that is beyond

comical. This includes only being able to provide answers they

already have, surveys that can't be completed, and general behaviour

indistinguishable from gross indifference.

I find it fascinating what companies do these days. They hate their

users so much that third parties are hired to deal with them. And you

can bet those third parties are used because they are cheap.

Most of them seem to operate by having 10 or so common answers, and

pattern match your question to the closest answer, hoping to close the

incident as quickly as possible.

Many software companies still think that it’s “economical” to run

tech support in Bangalore or the Philippines, or to outsource it to

another company altogether. Yes, the cost of a single incident

might be $10 instead of $50, but you’re going to have to pay $10

again and again.

—Seven steps to remarkable customer service

This is extremely short term thinking. The best customer service is

one you don't have to contact because things just work. And when

there are calls, actually address the issue so that more calls do not

come in. And use this to make your future products better. Better

products result in better sales in a good feedback loop. Worse

products and blown customer interactions are a way to bleed away

customer loyalty and future product purchases. Often that forces

competing on price due to the lack of other virtues, and isn't

particularly profitable or good for the long term.

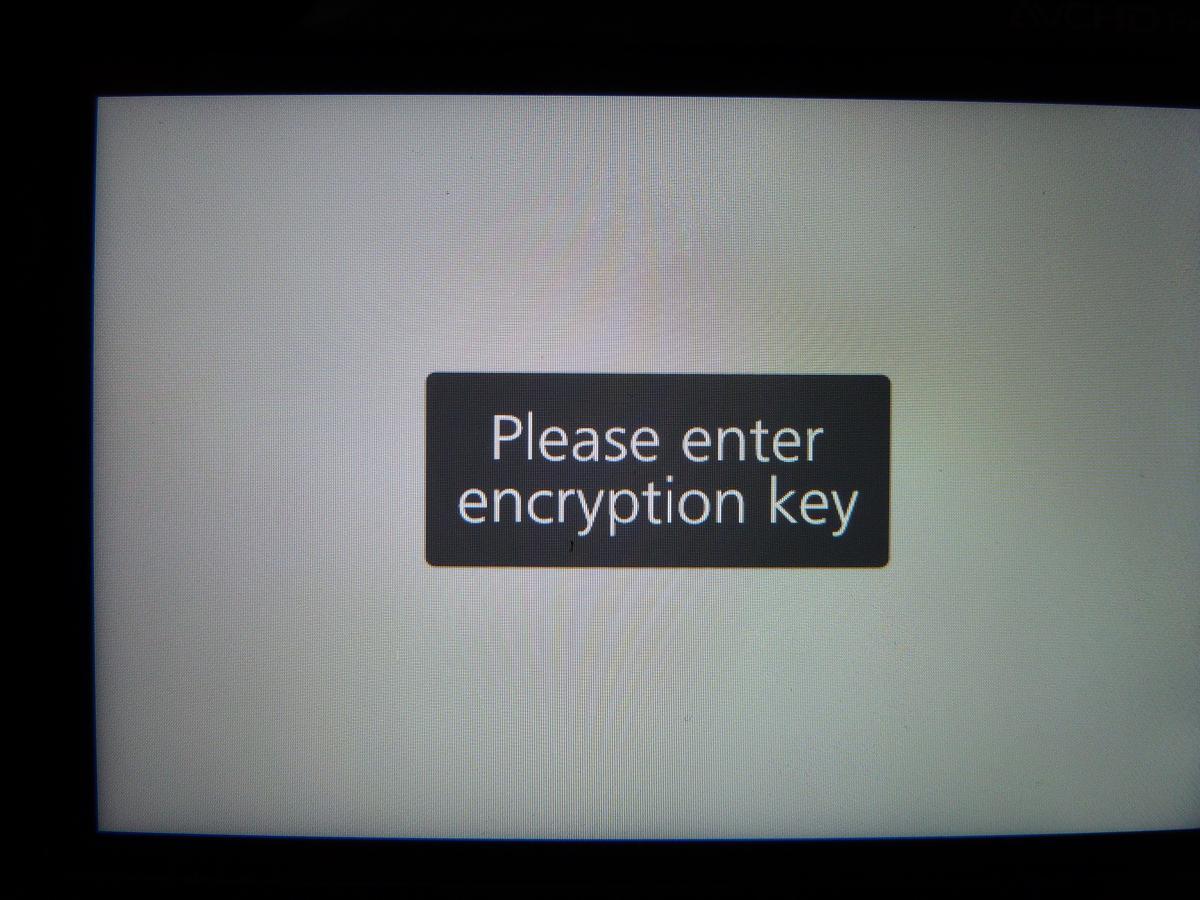

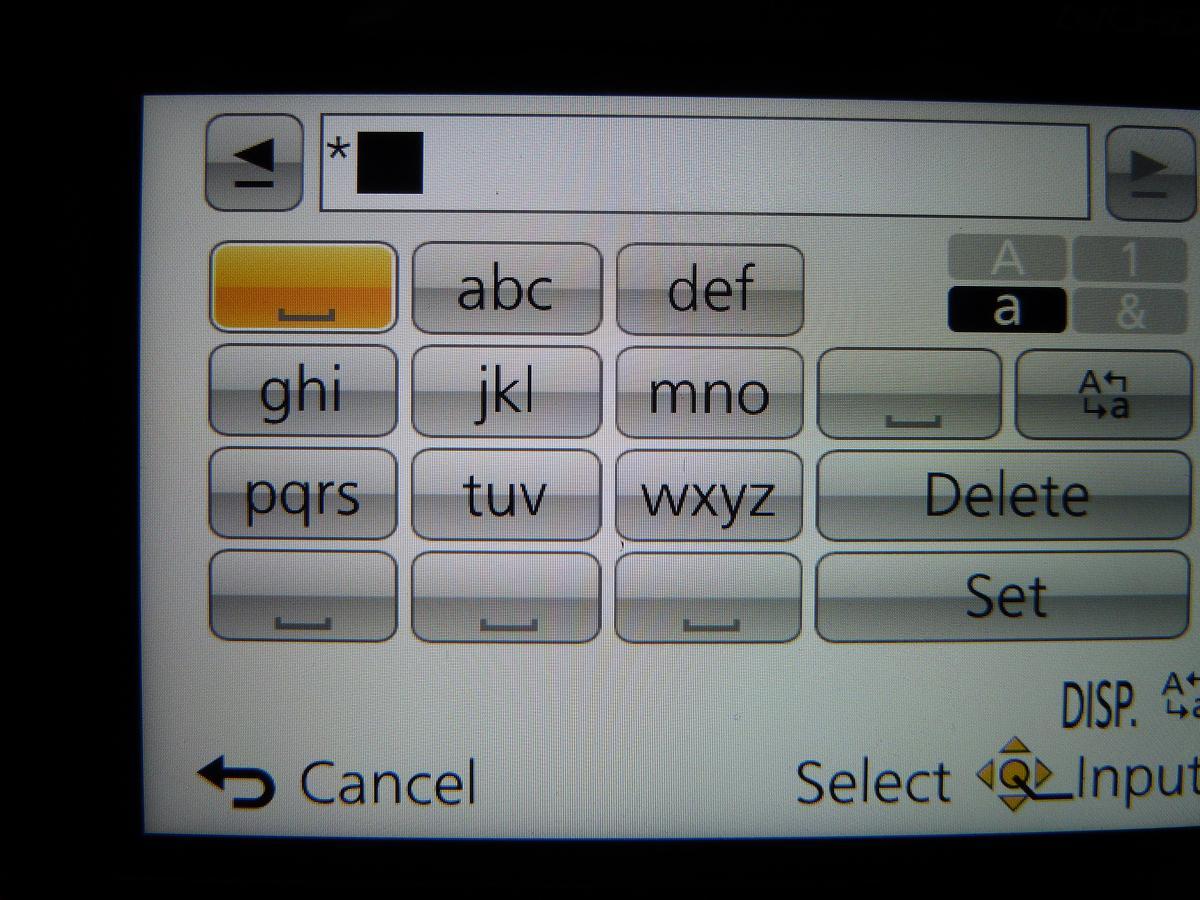

Panasonic makes many consumer goods, amongst them cameras. I bought a

DMC-ZS40, which includes wifi functionality. When connecting to a wifi access

point it won't let you enter a space in the password.

Spaces are perfectly acceptable in wifi passwords. Heck, all the

domestic access points I have access to use them! Now you know and I

know that this is a bug. But rather than being presumptuous, I

contacted Panasonic support to ask how I enter a space in wifi

passwords. Maybe there is some other way in just this case?
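
For the curious, here is a minimal sketch of why a space is nothing special. It assumes WPA/WPA2-Personal, where a passphrase is 8 to 63 printable ASCII characters (codes 32 to 126, and 32 is the space) and the key is derived with PBKDF2-HMAC-SHA1 using the network name as salt. The function names and the sample passphrase and network name are my own illustrations, not anything from Panasonic's firmware.

```python
import hashlib


def is_valid_wpa_passphrase(passphrase: str) -> bool:
    """Return True if this is an acceptable WPA/WPA2-Personal passphrase.

    Assumption: 8 to 63 characters, each printable ASCII (codes 32-126).
    Code 32 is the space character, so spaces are allowed.
    """
    return 8 <= len(passphrase) <= 63 and all(
        32 <= ord(ch) <= 126 for ch in passphrase
    )


def derive_psk(passphrase: str, ssid: str) -> bytes:
    """Derive the 256-bit pre-shared key from a passphrase and network name.

    PBKDF2-HMAC-SHA1, SSID as salt, 4096 iterations, 32 byte output.
    A space is hashed like any other byte.
    """
    return hashlib.pbkdf2_hmac(
        "sha1", passphrase.encode("ascii"), ssid.encode("utf-8"), 4096, 32
    )


if __name__ == "__main__":
    example = "correct horse battery"  # hypothetical passphrase with spaces
    print(is_valid_wpa_passphrase(example))
    print(derive_psk(example, "HomeNetwork").hex())  # hypothetical SSID
```

Nothing in that derivation cares whether a character is a space, so a keyboard that refuses one is a limitation of the camera's input screen rather than of wifi.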

I started with online chat. It quickly became clear that the customer

service staff do not have access to the cameras, nor had they ever

used that model or its similar predecessor. I pointed to the screen

shot on page 75 of the manual

(warning: PDF) and explained that the spaces worked elsewhere, just

not for wifi passwords. I had to repeat this over and over again - I

can enter spaces, just not for wifi passwords.

The rep tried everything but didn't really grasp what was going on.

And short of some secret setting there is nothing they could do, other

than take a lot of time to not address the issue. Eventually they

told me to call phone support.

It took 5 phone calls. The calls are answered by an IVR (voice)

system that asks which product, then whether it has correctly recognised

what you want (e.g. support, buying accessories), and then, within support,

whether you want to hear common tips before finally connecting you to a person. Actually

just before the human connection they ask if they can call you back

for a survey. At no point can you press buttons - you can only

proceed by speaking. (You also can't go back.)

#1 I described the issue to a person who immediately hung up. My

guess is wifi related issues are longer calls and make their stats

look worse.

#2 It decided that I was talking about a TV, and then connected me

to a number saying it was out of service permanently.

#3 The rep never spoke and I could hear some background talking

for about 10 seconds before it got quiet. After trying various things

to get attention I hung up.

#4 It decided I wanted to buy parts and insisted on taking me down

that road. Yes I did end up swearing at the idiocy.

#5 I got through to a person and did pretty much the same as with

the chat person. (Yes, they too had no experience of or access to the camera,

nor had they seen its keyboard.) We did the page 75 of the manual thing plus

fruitless attempts to enter the space. After 28 minutes they decided

that a higher level support person needed to get back to me. I'm not

sure if it ever sank in that I was perfectly able to enter spaces --

just not for wifi passwords.

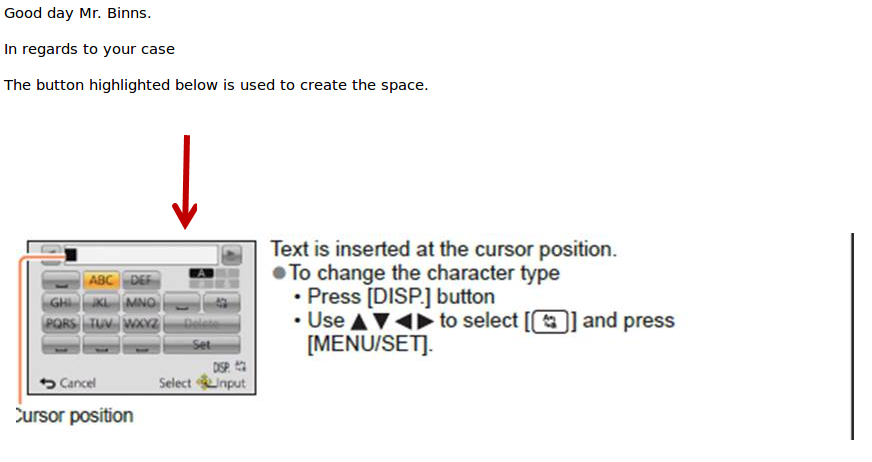

That is cut straight from page 75 of the manual - the same one I kept

pointing them to. I am completely mystified as to what the red arrow

is pointing to.

Needless to say this has cost Panasonic money and is going to cost

them even more as I persevere to get the issue addressed. It didn't

have to be this way.

Update: I then tried to use the "email" option where you enter

details in a web form and will get a response within two business

days. This was so I could link to screenshots to show the problem.

I still hadn't got a response 10 days later.

I did another chat where they acknowledged the issue but said there

was absolutely nothing that could be done. They refused to actually

tell Panasonic about the issue. They insisted I call because they can't

take personal information in chats. I pointed out I didn't need to be

contacted, they already took my information to initiate the chat, and

it is Panasonic who need to be contacted on the issue. The chat

transcript they emailed also included a survey link. Clicking the

link just resulted in a redirect to the Panasonic US home page.

Figuring that nothing would happen, I made a phone call too. After some

back and forth, including giving an imgur URL verbally, they finally

came back and told me you can't enter spaces in a wifi password. I

had to remind them that was exactly what I had told them. Then I was

told the camera was set in stone and could not possibly be changed. I

pointed out the firmware version was 1.0 and my previous model had

firmware updates, so it was perfectly possible. Eventually the rep

said the information would be passed on to Panasonic (yeah right). I

got the usual phone call survey afterwards, where a few of the questions

wouldn't recognise my answers.

I am befuddled as to why Accent/Panasonic aren't noticing a lack of

survey responses. It is in Accent's interest that negative ones don't

get through.
